Every time you feel like an algorithm is watching you, judging you, and quietly determining your life options based on data you can't see or control, you're experiencing something that would be instantly familiar to a 17th-century London merchant. The only difference is that his version was run by humans with ledger books instead of computers with machine learning models.

The modern anxiety about being "scored" by invisible systems isn't a reaction to new technology. It's a reaction to a human institution that's been refining itself for over 400 years. And we've always hated it for exactly the same reasons.

The Birth of Invisible Scoring

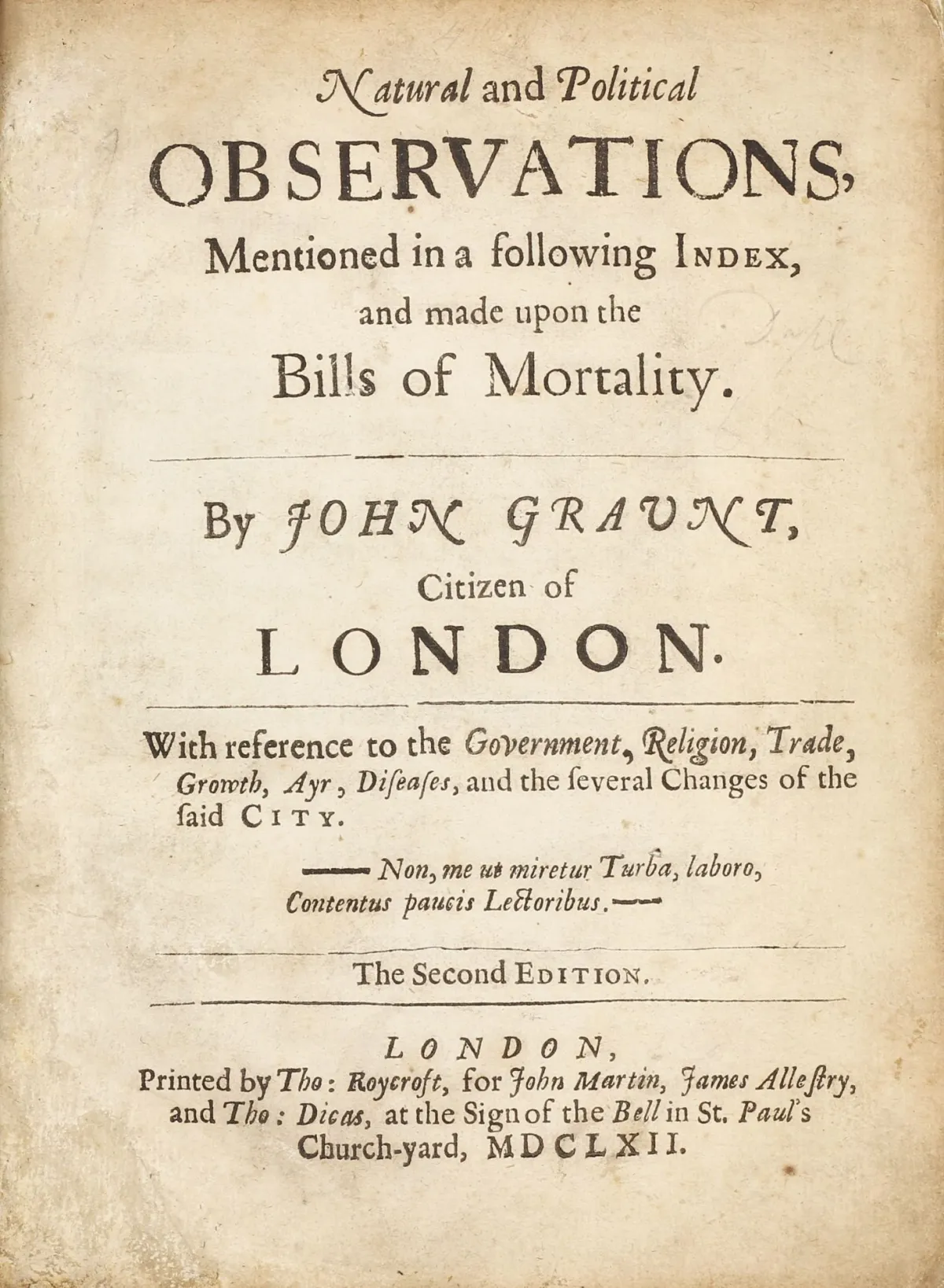

In 1662, four years before London burned down, a haberdasher named John Graunt published something called "Natural and Political Observations Made upon the Bills of Mortality." Sounds boring, right? It was actually one of the first systematic attempts to find predictable patterns in human lives using data.

Photo: John Graunt, via www.milestone-books.de


Graunt had figured out that you could take London's parish records of burials and christenings, crunch some numbers, and estimate with surprising consistency how many people in a given group would die at each age. Insurers eventually saw the potential. Over the following century, mortality tables descended from Graunt's methods became a basis for deciding who got coverage and at what price.

The psychological impact is familiar: ordinary people found themselves being judged by criteria they couldn't understand or influence. Sound like anything you've experienced lately?

Coffee Houses and Credit Networks

By 1700, London's coffee houses had developed something very much like an informal credit scoring network. Merchants would gather to trade not just goods, but information about who paid their bills, who could be trusted with loans, and who had a reputation for reliability.

These weren't formal institutions — just groups of guys drinking coffee and gossiping about business. But the psychological effect was profound. Your reputation, condensed into whispered assessments and shared stories, could determine whether you got credit, partnerships, or even basic respect.

The system was completely opaque. Nobody published the criteria. There was no appeals process. You could wake up one morning and discover that some rumor or misunderstanding had tanked your standing in the merchant community, and you'd have no idea why or how to fix it.

Modern research on stress suggests why this was so psychologically damaging. Humans are wired to understand the rules of their social environment. When those rules become invisible and uncontrollable, the unpredictability itself becomes a threat, and the body can respond to it much as it responds to physical danger.

The Guild Algorithm

Medieval guilds had perfected an even more sophisticated version of algorithmic scoring centuries earlier. Guild masters maintained detailed records of apprentice performance, journeyman reliability, and master craftsman quality. These records determined everything from work assignments to marriage prospects.

Photo: Medieval guilds, via www.thoughtco.com


The guild system was essentially a machine learning algorithm run by humans. Masters would track dozens of variables — punctuality, skill development, social connections, family background — and use pattern recognition to predict who would succeed in the trade.

Like modern algorithms, the guild system could be both effective and deeply unfair. It may well have been good at predicting who would succeed in the trade, but partly because it decided who got the chance to succeed. In doing so, it perpetuated existing inequalities by encoding historical biases into its decision-making process.

Insurance and the Mathematics of Mistrust

By the 1800s, insurance companies had industrialized the process of human scoring. Actuaries — basically 19th-century data scientists — developed increasingly sophisticated ways to categorize risk and assign premiums.

This is where things get psychologically interesting. Insurance companies discovered that you didn't need to know anything about an individual person to price their risk. You just needed to know which categories they belonged to (age, occupation, neighborhood, family history), and you could calculate the expected risk of people in those categories with mathematical precision.

The result was a system that felt deeply personal (your rates, your coverage, your risk) but was actually completely impersonal (based entirely on statistical categories). This mismatch, feeling individually judged by a system that doesn't actually see you as an individual, is the same psychological tension that drives modern algorithm anxiety.
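To make that concrete, here is a minimal Python sketch of category-based pricing. Everything in it is hypothetical: the base premium, the categories, the multipliers, and the two applicants are invented for illustration and are not drawn from any historical actuarial table. The point is only that the calculation never looks at the person, just at the boxes the person falls into.

```python
from dataclasses import dataclass

# Hypothetical numbers, invented purely for illustration.
BASE_PREMIUM = 10.0
AGE_BAND_FACTOR = {"under_30": 0.8, "30_to_50": 1.0, "over_50": 1.5}
OCCUPATION_FACTOR = {"clerk": 1.0, "miner": 1.75, "sailor": 2.0}


@dataclass(frozen=True)
class Applicant:
    name: str
    age_band: str
    occupation: str


def premium(applicant: Applicant) -> float:
    """Price an applicant from category membership alone.

    Nothing about the individual (habits, history, character)
    enters the calculation; only the category multipliers do.
    """
    return (
        BASE_PREMIUM
        * AGE_BAND_FACTOR[applicant.age_band]
        * OCCUPATION_FACTOR[applicant.occupation]
    )


# Two very different people who happen to share the same categories.
careful = Applicant("Careful Tom", "30_to_50", "sailor")
reckless = Applicant("Reckless Will", "30_to_50", "sailor")

print(premium(careful), premium(reckless))
```

Both applicants come out with the same price, because the function has no way to tell them apart. That is the tension in miniature: the number arrives with your name on it, but it was computed for your category.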

The Digitization of Ancient Anxiety

What Silicon Valley did wasn't revolutionary — it was evolutionary. Credit scores, recommendation engines, and risk algorithms are just digitized versions of scoring systems that humans have been developing and refining for centuries.

The psychological experience is identical: the feeling of being invisibly observed, mysteriously categorized, and algorithmically sorted based on criteria you can't fully understand or control. The only difference is speed and scale.

A 17th-century merchant might spend years building or destroying his reputation in London's coffee house network. Your credit score can change overnight based on automated decisions made by computers you'll never see.

Why We've Always Hated It

Every generation has complained about scoring systems in strikingly similar terms. Guild craftsmen complained that rankings were biased and unchangeable. 18th-century merchants complained that coffee house gossip networks were unfair and opaque. 20th-century consumers complained that credit agencies had too much power and too little accountability.

The complaints haven't changed because the underlying psychological problem hasn't changed. Humans need to feel some control over their social and economic environment. Scoring systems — whether run by coffee house gossips or machine learning algorithms — create the opposite feeling: that you're being judged by forces you can't influence or even fully understand.

Research on learned helplessness helps explain why this is so psychologically damaging. When people come to believe their outcomes are determined by invisible, uncontrollable forces, they tend to stop trying to change them, a pattern linked to depression, anxiety, and social withdrawal. Those are the same symptoms people now describe as "algorithm anxiety."

The Eternal Cycle

Here's what 400 years of scoring system evolution reveals: every generation thinks their version is uniquely invasive and unfair, and every generation is basically right. Each iteration has been more sophisticated, more pervasive, and more psychologically challenging than the last.

But the basic human response has remained constant: we adapt to the system, complain about its unfairness, and then build an even more sophisticated version for the next generation to complain about.

The coffee house merchants who invented informal credit networks probably had no idea they were creating the psychological template for modern algorithm anxiety. But they were responding to the same fundamental human need: the desire to predict and control risk in an uncertain world.

Your credit score isn't a modern invention — it's the latest iteration of a very old human institution. And your feelings about it aren't a response to new technology — they're the same psychological reactions that have been documented for centuries.

The only real difference is that now we have the historical data to recognize the pattern.